AI in Healthcare: Diagnosis Revolution or Regulatory Nightmare?

Artificial intelligence is transforming healthcare diagnostics at a rapid pace, and the FDA authorized record numbers of AI-enabled medical devices in 2025. The technology promises faster, more accurate diagnoses, but it also raises critical questions about data privacy, algorithmic bias, and regulatory oversight. As healthcare systems worldwide work out how to integrate AI into clinical workflows, the tension between innovation and patient protection is sharper than ever.

What is AI-Assisted Diagnostics?

AI-assisted diagnostics refers to the use of artificial intelligence to analyze medical data and support clinical decision-making. These systems use machine learning models trained on large datasets of medical images, electronic health records, and patient histories to identify patterns that clinicians might miss. AI diagnostics is one driver of growth in the broader connected-health market: the global healthcare IoT market has been projected to reach $534.3 billion by 2025. According to a 2025 meta-analysis in PLOS One, AI algorithms for detecting conditions such as tooth decay (dental caries) have shown enough evidence to justify clinical use. This suggests that some of these tools are moving from experimental prototypes to validated medical devices.

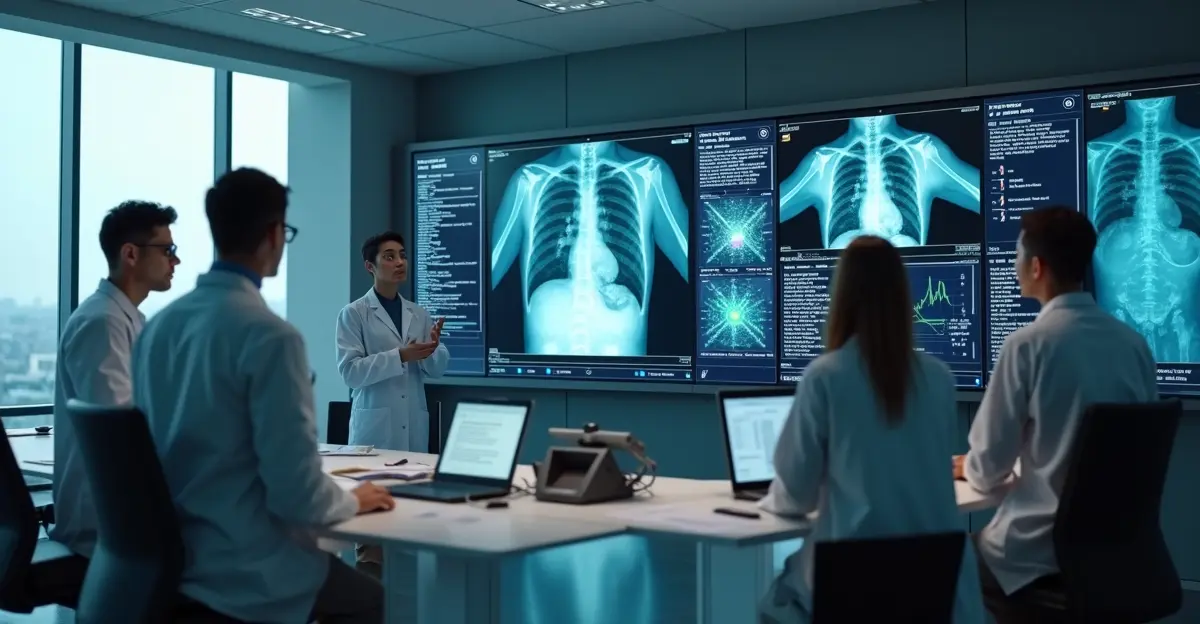

The Diagnostic Revolution: Unprecedented Accuracy and Speed

AI diagnostic tools are producing strong results across multiple medical specialties. In radiology, radiographs (X-rays) are the most commonly performed imaging tests. There, AI systems can help triage and interpret studies, potentially reducing diagnostic delays. In some studies these tools match or exceed human readers on specific, narrowly defined tasks. They can flag subtle anomalies in X-rays, MRIs, and CT scans that a radiologist might overlook.
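Triage can be made concrete with a small example. This minimal Python sketch assumes a hypothetical model that gives each imaging study a suspicion score between 0 and 1. The function name `triage`, the study IDs, and the 0.8 flag threshold are illustrative assumptions, not taken from any real product. The code only reorders the reading queue, and every study still reaches a radiologist.

```python
from typing import List, Tuple


def triage(worklist: List[Tuple[str, float]], flag_threshold: float = 0.8):
    """Reorder a radiology worklist by a (hypothetical) AI suspicion score.

    worklist: (study_id, score) pairs in arrival order; score is in [0, 1].
    Returns (reading_order, flagged), where flagged lists studies scoring at
    or above flag_threshold. No study is removed from the queue.
    """
    # sorted() is stable even with reverse=True, so studies with equal
    # scores keep their original arrival order.
    ordered = sorted(worklist, key=lambda item: item[1], reverse=True)
    reading_order = [study_id for study_id, _ in ordered]
    flagged = [study_id for study_id, score in ordered if score >= flag_threshold]
    return reading_order, flagged


if __name__ == "__main__":
    queue = [("chest-001", 0.12), ("chest-002", 0.91), ("chest-003", 0.47), ("chest-004", 0.91)]
    order, urgent = triage(queue)
    print(order)   # ['chest-002', 'chest-004', 'chest-003', 'chest-001']
    print(urgent)  # ['chest-002', 'chest-004']
```

The threshold is kept separate from the ordering. A site could therefore tune how many studies get flagged as urgent without changing the order in which studies are read.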

Real-World Applications and Success Stories

Healthcare institutions implementing AI diagnostics report significant improvements in early disease detection. For Alzheimer's disease and related dementias, AI algorithms can analyze electronic health records for patterns that may predict cognitive decline years before symptoms appear. Natural language processing (NLP) helps standardize medical terminology across healthcare providers, which produces more consistent datasets for analysis. The Congressional Research Service report on AI in healthcare notes that these technologies are increasingly applied to diagnostics, treatment planning, drug discovery, and patient monitoring.

The Economic Impact of AI Diagnostics

The economic implications are substantial. Connected healthcare ecosystems have been estimated to generate $1.60 trillion in annual economic impact by 2025 through improved efficiency and resource utilization. Chronic diseases, which affect over 40% of Americans, are a particularly promising area for AI. Continuous monitoring through wearables and biosensors can enable earlier intervention and fewer hospital readmissions.

The Regulatory Nightmare: Privacy, Bias, and Compliance Challenges

Despite the promising applications, AI in healthcare faces significant regulatory hurdles. The intersection of HIPAA regulations, healthcare data, and artificial intelligence creates complex compliance requirements that many organizations struggle to navigate. As highlighted in the comprehensive 2025 review examining ethical and legal challenges, key concerns include data privacy, algorithmic bias, liability for AI errors, and cross-border regulatory harmonization.

HIPAA Compliance and Data Protection

Healthcare organizations that use AI with protected health information must comply with HIPAA's three core rules: the Privacy Rule, the Security Rule, and the Breach Notification Rule. There is no such thing as "HIPAA certified AI"; compliance depends on how a system is deployed, configured, documented, and monitored. Data used to train AI models is often de-identified first. Under HIPAA's Safe Harbor method, that means removing all 18 specified identifiers; the Expert Determination method is the alternative. Meeting these requirements creates technical challenges for developers. The Sharp HealthCare lawsuit is a cautionary tale: an AI scribe allegedly recorded patients without proper consent, showing the real-world consequences of compliance missteps.
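Part of the Safe Harbor approach can be illustrated in code. This Python sketch assumes records arrive as dictionaries with hypothetical field names such as `name`, `ssn`, `zip`, and `age`. It performs four steps:

- drops fields mapped to direct identifiers
- reduces dates to the year
- truncates ZIP codes to three digits, using "000" for sparsely populated areas
- groups ages over 89 into a single "90+" category

The caller supplies the set of restricted ZIP prefixes; the `{"036"}` below is only an illustrative value. The sketch covers only some of the 18 identifier categories and is not, on its own, a compliant de-identification pipeline. Free-text notes, images, and other fields need separate handling.

```python
from datetime import date
from typing import Any, Dict, Set

# Hypothetical field names mapped to some of the 18 Safe Harbor identifier
# categories (names, contact details, record and account numbers, etc.).
DIRECT_IDENTIFIER_FIELDS = {
    "name", "phone", "fax", "email", "ssn", "medical_record_number",
    "health_plan_id", "account_number", "license_number", "vehicle_id",
    "device_serial", "url", "ip_address", "photo",
}


def deidentify(record: Dict[str, Any], restricted_zip3: Set[str]) -> Dict[str, Any]:
    """Return a copy of `record` with a subset of Safe Harbor rules applied.

    restricted_zip3: three-digit ZIP prefixes whose combined area has 20,000
    or fewer residents; Safe Harbor requires these to be replaced with "000".
    """
    cleaned: Dict[str, Any] = {}
    for key, value in record.items():
        if key in DIRECT_IDENTIFIER_FIELDS:
            continue  # drop direct identifiers entirely
        if isinstance(value, date):
            cleaned[key] = value.year  # keep only the year (datetime is a date subclass)
        elif key == "zip":
            zip3 = str(value)[:3]
            cleaned[key] = "000" if zip3 in restricted_zip3 else zip3
        elif key == "age" and isinstance(value, int) and value > 89:
            cleaned[key] = "90+"  # aggregate ages over 89 into one category
        else:
            cleaned[key] = value
    return cleaned


if __name__ == "__main__":
    patient = {
        "name": "Jane Doe",
        "ssn": "123-45-6789",
        "zip": "02139",
        "admission_date": date(2024, 3, 15),
        "age": 93,
        "diagnosis_code": "K02.9",
    }
    print(deidentify(patient, restricted_zip3={"036"}))
    # {'zip': '021', 'admission_date': 2024, 'age': '90+', 'diagnosis_code': 'K02.9'}
```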

Algorithmic Bias and Healthcare Disparities

Perhaps the most concerning challenge is the risk that algorithmic bias amplifies existing healthcare disparities. AI systems trained on historical medical data may inherit and perpetuate the biases in that data, leading to unequal diagnostic accuracy across demographic groups. This issue intersects with broader concerns about healthcare equity and access in an increasingly automated medical landscape.
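One common way to check for this kind of bias is a subgroup audit: compute a performance metric separately for each demographic group and compare the results. This sketch assumes labeled evaluation data in a hypothetical `(group, y_true, y_pred)` format. For each group it computes sensitivity, meaning the share of true cases the model catches. It then reports the gap between the best-served and worst-served groups. Sensitivity is only one possible choice. A real audit would also examine specificity, calibration, and confidence intervals, especially for small groups.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def sensitivity_by_group(records: Iterable[Tuple[str, int, int]]) -> Dict[str, float]:
    """Sensitivity (true positive rate) per demographic group.

    records: (group, y_true, y_pred), where 1 means disease present / flagged.
    Groups with no true cases are omitted, since sensitivity is undefined there.
    """
    true_pos: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_pos[group] += 1
    return {g: true_pos[g] / positives[g] for g in positives}


def sensitivity_gap(per_group: Dict[str, float]) -> float:
    """Difference between the highest and lowest group sensitivity."""
    return max(per_group.values()) - min(per_group.values())


if __name__ == "__main__":
    # Toy evaluation data, made up for illustration only.
    data = [
        ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 1, 1), ("A", 0, 0),
        ("B", 1, 1), ("B", 1, 0), ("B", 1, 1), ("B", 1, 0), ("B", 0, 1),
    ]
    per_group = sensitivity_by_group(data)
    print(per_group)                   # {'A': 1.0, 'B': 0.5}
    print(sensitivity_gap(per_group))  # 0.5
```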

FDA Approval Landscape: Record Growth in 2025

The FDA's authorizations of AI-enabled medical devices reached record numbers in 2025, reflecting growing regulatory acceptance of these technologies. The surge suggests that AI medical devices are maturing and moving from experimental stages into mainstream clinical use. The authorization process remains demanding, however. Manufacturers must submit performance evidence whichever pathway they use: 510(k) clearance, De Novo classification, or premarket approval. Post-market surveillance is then needed to confirm ongoing safety and effectiveness.
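Post-market surveillance often comes down to checking whether real-world performance drifts from what was measured before deployment. This minimal sketch assumes confirmed diagnoses eventually become available for each case. The hypothetical `PerformanceMonitor` class tracks agreement between model output and confirmed outcome over a sliding window. It raises an alert when agreement falls more than a chosen tolerance below a baseline. The baseline, tolerance, and window size are illustrative parameters, not regulatory values.

```python
from collections import deque


class PerformanceMonitor:
    """Sliding-window check of model agreement with confirmed diagnoses.

    baseline: accuracy measured before deployment (hypothetical value).
    tolerance: how far rolling accuracy may fall below baseline before alerting.
    window: number of most recent confirmed cases to consider.
    """

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 100):
        self.baseline = baseline
        self.tolerance = tolerance
        self.outcomes = deque(maxlen=window)  # oldest cases drop off automatically

    def record(self, prediction: int, confirmed: int) -> bool:
        """Log one case; return True if rolling accuracy is below the alert level."""
        self.outcomes.append(prediction == confirmed)
        if len(self.outcomes) < self.outcomes.maxlen:
            return False  # wait for a full window before judging performance
        accuracy = sum(self.outcomes) / len(self.outcomes)
        return accuracy < self.baseline - self.tolerance


if __name__ == "__main__":
    monitor = PerformanceMonitor(baseline=0.90, tolerance=0.05, window=10)
    for _ in range(10):
        monitor.record(prediction=1, confirmed=1)     # ten correct cases
    print(monitor.record(prediction=1, confirmed=0))  # False: 9/10 correct
    print(monitor.record(prediction=0, confirmed=1))  # True: 8/10 correct
```

Waiting for a full window avoids alerts triggered by a handful of early cases. A production system would likely track sensitivity and specificity separately, because overall agreement can hide a drop in one of them.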

Regulatory Frameworks and Global Harmonization

Different countries approach AI healthcare regulation with varying frameworks, creating challenges for global technology deployment. The European Union's Medical Device Regulation (MDR) and In Vitro Diagnostic Regulation (IVDR) impose stringent requirements that differ from FDA guidelines in the United States. This regulatory fragmentation complicates the development and deployment of AI diagnostic tools across international markets, potentially slowing innovation while increasing compliance costs.

Ethical Considerations and Patient Trust

Beyond regulatory compliance, ethical considerations loom large in the AI healthcare landscape. A 2023 systematic review found that most stakeholders—including health professionals, patients, and the general public—doubted that care involving AI could be empathetic. This trust deficit represents a significant barrier to adoption, even when technologies demonstrate clinical efficacy.

Transparency and Explainability

The "black box" nature of many AI algorithms creates transparency challenges. When an AI system recommends a particular diagnosis or treatment, healthcare providers and patients need to understand the reasoning behind that recommendation. This need for explainability conflicts with the complexity of deep learning models, creating tension between technological capability and clinical practicality.
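A simple linear risk score shows what an explanation can look like when the model allows one. This Python sketch uses hypothetical feature names and made-up weights, not a validated clinical model. A logistic model's log-odds split exactly into one additive contribution per input. A clinician can therefore see which findings pushed the risk up or down. Deep learning models have no such direct decomposition. That is why post-hoc explanation methods are used for them, and why the tension between capability and explainability exists.

```python
import math
from typing import Dict, Tuple


def explain_linear_risk(
    weights: Dict[str, float], bias: float, features: Dict[str, float]
) -> Tuple[float, Dict[str, float]]:
    """Return (predicted probability, per-feature contribution to the log-odds)."""
    # In a logistic model, log-odds = bias + sum(weight * value), so each
    # term is that feature's exact additive contribution.
    contributions = {name: weights[name] * value for name, value in features.items()}
    log_odds = bias + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    return probability, contributions


if __name__ == "__main__":
    # Hypothetical weights for illustration only.
    weights = {"age_decades": 0.5, "smoker": 1.0, "normal_bmi": -0.4}
    patient = {"age_decades": 6, "smoker": 1, "normal_bmi": 1}
    prob, parts = explain_linear_risk(weights, bias=-3.0, features=patient)
    print(f"risk = {prob:.3f}")
    # List contributions from largest to smallest absolute effect.
    for name, value in sorted(parts.items(), key=lambda kv: -abs(kv[1])):
        print(f"  {name}: {value:+.2f} log-odds")
```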

The Future Outlook: Balancing Innovation and Protection

Looking toward 2026, the trajectory of AI in healthcare diagnostics will depend on finding the right balance between innovation acceleration and patient protection. Emerging technologies like blockchain for healthcare data security and advanced encryption methods offer potential solutions to privacy concerns, while ongoing research into algorithmic fairness aims to address bias issues.

Multidisciplinary Collaboration Required

Successful integration of AI into healthcare requires collaboration between technologists, healthcare providers, legal experts, and policymakers. As emphasized in recent analyses, flexible, globally harmonized regulatory frameworks must evolve alongside AI innovation to ensure safe and equitable healthcare systems. Public engagement will be crucial for building trust and ensuring ethical AI adoption, particularly as these technologies become more integrated into routine clinical practice.

Frequently Asked Questions

What are the main benefits of AI in healthcare diagnostics?

AI diagnostic tools offer faster, more accurate analysis of medical data, early disease detection, reduced diagnostic delays, and improved resource utilization. They can analyze patterns across vast datasets that exceed human capability, potentially identifying conditions earlier than traditional methods.

How does HIPAA apply to AI systems in healthcare?

AI systems handling protected health information must comply with HIPAA's Privacy, Security, and Breach Notification Rules. This includes proper data de-identification for training, secure data handling protocols, and appropriate business associate agreements when third-party AI vendors are involved.

What are the risks of algorithmic bias in medical AI?

Algorithmic bias occurs when AI systems trained on historical medical data inherit and perpetuate existing healthcare disparities. This can lead to unequal diagnostic accuracy across different demographic groups, potentially worsening healthcare inequities rather than alleviating them.

How many AI medical devices has the FDA approved?

The FDA authorized record numbers of AI-enabled medical devices in 2025, reflecting significant growth in regulatory acceptance. Exact totals depend on how devices are classified and counted. The FDA maintains a public list of the AI-enabled medical devices it has authorized, which is the most reliable place to check current figures.

Can AI replace human doctors in diagnostics?

Current consensus suggests AI will augment rather than replace human clinicians. AI excels at pattern recognition and data analysis, while human doctors provide clinical judgment, empathy, and complex decision-making that integrates multiple factors beyond pure data analysis.

Sources

Congressional Research Service Report on AI in Healthcare

2025 Review of Ethical and Legal Challenges in AI Healthcare

HIPAA Journal: Healthcare Data and Artificial Intelligence

FDA AI Medical Device Approvals Reach Record Numbers in 2025

Wikipedia: Artificial Intelligence in Healthcare
